
Training

Today we will learn how to train and evaluate a machine learning model. You'll learn how to choose the right Machine Learning metric for the problem you are solving and how to compute it. A metric gives an idea of how well the model performs. The metrics to consider differ depending on whether you are working on a classification problem or a regression problem. It is important to understand that all metrics are just metrics, not the truth.

We will focus on the most important metrics:

- Regression:

- R2, Mean Square Error, Mean Absolute Error

- Classification:

- F1 score, accuracy, precision, recall and AUC scores. Even if it is not considered a metric, the confusion matrix is always useful to understand the model performance.

Warning: Imbalanced data set

Let us assume we are predicting a rare event that occurs less than 2% of the time. Getting a model with a good accuracy is easy: it doesn't have to be "smart", all it has to do is always predict the majority class. Depending on the problem, this can be disastrous. For example, with real-life data, breast cancer prediction is an imbalanced problem where always predicting the majority class leads to disastrous consequences. That is why metrics such as AUC are useful. Before computing the metrics, carefully read this article to understand the role of these metrics.
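To make the warning concrete, here is a minimal sketch in plain Python. The labels are hypothetical toy data (1000 samples, 2% positives), and the "model" is just a constant prediction, not a real classifier.

```python
# Hypothetical toy data: 1000 samples, 2% positives (the rare event).
y_true = [1] * 20 + [0] * 980

# A "model" that ignores its input and always predicts the majority class.
y_pred = [0] * len(y_true)

# Accuracy: share of predictions that match the labels.
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Recall: share of the actual positives that the model found.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
recall = true_positives / sum(y_true)

print(f"accuracy = {accuracy:.2f}")  # accuracy = 0.98
print(f"recall   = {recall:.2f}")    # recall   = 0.00
```

The accuracy looks excellent while the model never detects a single rare event, which is exactly the situation accuracy alone cannot reveal.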

You'll learn to train types of Machine Learning models other than linear regression and logistic regression. You're not supposed to spend time understanding the theory; I recommend doing that during the projects. Today, read the Scikit-learn documentation to get a basic understanding of the models you use. Focus on how to use those Machine Learning models correctly with Scikit-learn.

You'll also learn what a grid search is and how to use it to train your machine learning models.

Exercises of the day

- Exercise 0: Environment and libraries

- Exercise 1: MSE Scikit-learn

- Exercise 2: Accuracy Scikit-learn

- Exercise 3: Regression

- Exercise 4: Classification

- Exercise 5: Machine Learning models

- Exercise 6: Grid Search

Virtual Environment

- Python 3.x

- NumPy

- Pandas

- Matplotlib

- Scikit Learn

- Jupyter or JupyterLab

Version of Scikit Learn I used to do the exercises: 0.22. I suggest using the most recent one. Scikit Learn 1.0 is finally available after ... 14 years.

Resources

Metrics

Imbalance datasets

Gridsearch

Exercise 0: Environment and libraries

The goal of this exercise is to set up the Python work environment with the required libraries.

Note: For each quest, your first exercise will be to set up the virtual environment with the required libraries.

I recommend using:

- the latest stable version of Python.

- the virtual environment you're the most comfortable with. virtualenv and conda are the most used in Data Science.

- one of the most recent versions of the required libraries.

- Create a virtual environment named ex00, with a version of Python >=3.8, with the following libraries: pandas, numpy, jupyter, matplotlib and scikit-learn.
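Once the environment is activated, one way to check that the libraries are visible from Python is the sketch below. It only uses the standard library (importlib.metadata, available from Python 3.8); the function name installed_versions is a hypothetical helper, not part of any of the libraries.

```python
from importlib import metadata

# Distribution names as published on PyPI (scikit-learn, not sklearn).
REQUIRED = ["pandas", "numpy", "jupyter", "matplotlib", "scikit-learn"]


def installed_versions(packages):
    """Return {package: version string, or None if it is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


report = installed_versions(REQUIRED)
for name, version in report.items():
    print(f"{name:<14}{version or 'NOT INSTALLED'}")
```

Any line showing NOT INSTALLED means the package is missing from the environment that is currently active.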

Exercise 1: MSE Scikit-learn

The goal of this exercise is to learn to use sklearn.metrics to compute the mean squared error (MSE).

- Compute the MSE using sklearn.metrics on y_true and y_pred below:

y_true = [91, 51, 2.5, 2, -5]

y_pred = [90, 48, 2, 2, -4]

Exercise 2: Accuracy Scikit-learn

The goal of this exercise is to learn to use sklearn.metrics to compute the accuracy.

- Compute the accuracy using sklearn.metrics on y_true and y_pred below:

y_pred = [0, 1, 0, 1, 0, 1, 0]

y_true = [0, 0, 1, 1, 1, 1, 0]

Exercise 3: Regression

The goal of this exercise is to learn to evaluate a machine learning model using many regression metrics.

Preliminary:

- Import the California Housing data set and split it into a train set and a test set (10%). Fit a linear regression on the data set. The goal is to focus on the metrics, which is why the code to fit the Linear Regression is given.

# imports

from sklearn.datasets import fetch_california_housing

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LinearRegression

from sklearn.preprocessing import StandardScaler

from sklearn.impute import SimpleImputer

from sklearn.pipeline import Pipeline

# data

housing = fetch_california_housing()

X, y = housing['data'], housing['target']

# split data train test

X_train, X_test, y_train, y_test = train_test_split(X,

y,

test_size=0.1,

shuffle=True,

random_state=13)

# pipeline

pipeline = [('imputer', SimpleImputer(strategy='median')),

('scaler', StandardScaler()),

('lr', LinearRegression())]

pipe = Pipeline(pipeline)

# fit

pipe.fit(X_train, y_train)

- Predict on the train set and test set

- Compute R2, Mean Square Error, Mean Absolute Error on both train and test set
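To see what these three numbers measure, here is a hand-written sketch of the usual definitions in plain Python. The helper names (mse, mae, r2) and the four toy values are hypothetical; in the exercise you would call the functions from sklearn.metrics instead, which as far as I know follow the same definitions by default.

```python
def mse(y_true, y_pred):
    """Mean Squared Error: average of the squared residuals."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean Absolute Error: average of the absolute residuals."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """R2: 1 - (residual sum of squares / total sum of squares)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Hypothetical toy values, unrelated to the California Housing data.
toy_true = [3.0, 5.0, 7.0, 9.0]
toy_pred = [2.0, 5.0, 8.0, 9.0]

print(mse(toy_true, toy_pred))  # 0.5
print(mae(toy_true, toy_pred))  # 0.5
print(r2(toy_true, toy_pred))   # 0.9
```

MSE and MAE are expressed in the (squared) units of the target, while R2 is unitless: 1 is a perfect fit and 0 means the model does no better than always predicting the mean.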

Exercise 4: Classification

The goal of this exercise is to learn to evaluate a machine learning model using many classification metrics.

Preliminary:

- Import the Breast Cancer data set and split it into a train set and a test set (20%). Fit a logistic regression on the data set. The goal is to focus on the metrics, which is why the code to fit the Logistic Regression is given.

from sklearn.linear_model import LogisticRegression

from sklearn.datasets import load_breast_cancer

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

X , y = load_breast_cancer(return_X_y=True)

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.20)

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

classifier = LogisticRegression()

classifier.fit(X_train_scaled, y_train)

- Predict on the train set and test set

- Compute F1, accuracy, precision, recall, roc_auc scores on the train set and test set. Print the confusion matrix on the test set results.
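Precision, recall, F1 and accuracy can all be read off the four cells of the confusion matrix. The sketch below derives them by hand in plain Python on hypothetical toy labels; it is meant to show the definitions, not to replace sklearn.metrics. The layout in the comment (rows are true classes, columns are predicted classes) is, to my knowledge, the one scikit-learn uses for binary labels 0 and 1.

```python
# Hypothetical toy labels (1 = positive class).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

pairs = list(zip(y_true, y_pred))
tp = sum(t == 1 and p == 1 for t, p in pairs)  # true positives
fn = sum(t == 1 and p == 0 for t, p in pairs)  # false negatives
fp = sum(t == 0 and p == 1 for t, p in pairs)  # false positives
tn = sum(t == 0 and p == 0 for t, p in pairs)  # true negatives

# Confusion matrix laid out as [[TN, FP], [FN, TP]].
confusion = [[tn, fp], [fn, tp]]

precision = tp / (tp + fp)  # of the predicted positives, how many are right
recall = tp / (tp + fn)     # of the actual positives, how many were found
f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
accuracy = (tp + tn) / len(y_true)

print(confusion)                      # [[5, 1], [2, 2]]
print(f"precision = {precision:.3f}")  # precision = 0.667
print(f"recall    = {recall:.3f}")     # recall    = 0.500
print(f"f1        = {f1:.3f}")         # f1        = 0.571
print(f"accuracy  = {accuracy:.3f}")   # accuracy  = 0.700
```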

Note: AUC should be computed on predicted probabilities (or decision scores), not on predicted classes.
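The reason is that AUC measures how well the model ranks positives above negatives, and hard classes throw most of the ranking away. The sketch below uses the pairwise definition of AUC (the fraction of positive/negative pairs ranked correctly, ties counting for one half) in plain Python. The helper auc_pairwise and the toy values are hypothetical; with scikit-learn you would typically pass the positive-class column of predict_proba to roc_auc_score.

```python
def auc_pairwise(y_true, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    # A tie between a positive and a negative counts as half a win.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy labels and predicted probabilities of the positive class.
y_true = [0, 0, 0, 1, 1, 1]
proba = [0.1, 0.2, 0.3, 0.4, 0.45, 0.9]

# Hard classes obtained with the usual 0.5 threshold.
classes = [1 if p >= 0.5 else 0 for p in proba]

auc_from_proba = auc_pairwise(y_true, proba)
auc_from_classes = auc_pairwise(y_true, classes)

print(f"AUC on probabilities: {auc_from_proba:.3f}")   # 1.000
print(f"AUC on classes:       {auc_from_classes:.3f}")  # 0.667
```

The probabilities rank every positive above every negative, so the AUC is perfect, yet the thresholded classes hide that and report a much lower value.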

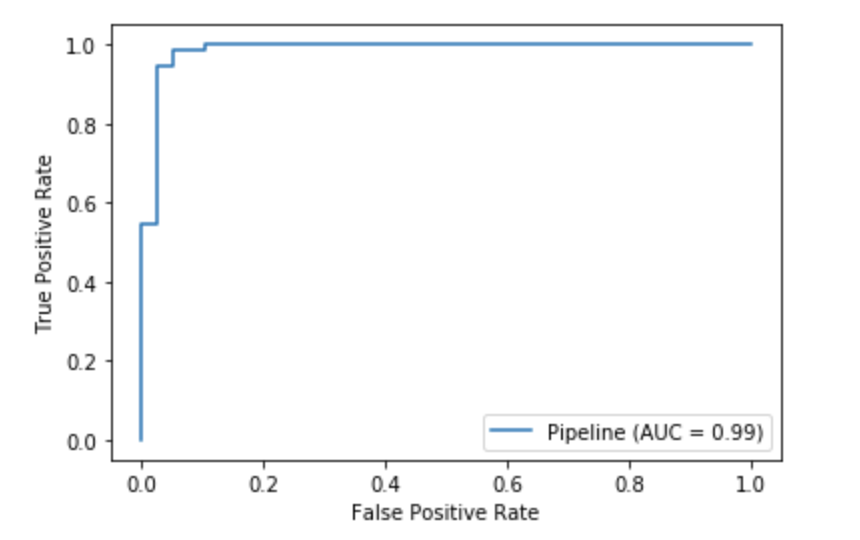

- Plot the AUC curve for on the test set using roc_curve of scikit learn. There many ways to create this plot. It should look like this:

Exercise 5: Machine Learning models

The goal of this exercise is to have an overview of the existing Machine Learning models and to learn to call them from scikit learn. We will focus on:

- SVM/SVC

- Decision Tree

- Random Forest (Ensemble learning)

- Gradient Boosting (Ensemble learning, Boosting techniques)

All these algorithms exist in two versions: regression and classification. Even if the logic is similar in both classification and regression, the loss function is specific to each case.

It is really easy to get lost among all the existing algorithms. This article is very useful to get a clear overview of the models and to understand which algorithm to use and when. https://towardsdatascience.com/how-to-choose-the-right-machine-learning-algorithm-for-your-application-1e36c32400b9

Preliminary:

- Import the California Housing data set and split it into a train set and a test set (10%). Fit a linear regression on the data set. The goal is to focus on the metrics, which is why the code to fit the Linear Regression is given.

# imports

from sklearn.datasets import fetch_california_housing

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LinearRegression

from sklearn.preprocessing import StandardScaler

from sklearn.impute import SimpleImputer

from sklearn.pipeline import Pipeline

# data

housing = fetch_california_housing()

X, y = housing['data'], housing['target']

# split data train test

X_train, X_test, y_train, y_test = train_test_split(X,

y,

test_size=0.1,

shuffle=True,

random_state=43)

# pipeline

pipeline = [('imputer', SimpleImputer(strategy='median')),

('scaler', StandardScaler()),

('lr', LinearRegression())]

pipe = Pipeline(pipeline)

# fit

pipe.fit(X_train, y_train)

- Create 5 pipelines with 5 different models as final estimator (keep the imputer and scaler unchanged):

- Linear Regression

- SVM

- Decision Tree (set random_state=43)

- Random Forest (set random_state=43)

- Gradient Boosting (set random_state=43)

Take time to get a basic understanding of the role of the main hyperparameters and their default values.

- For each algorithm, print the R2, MSE and MAE on both train set and test set.
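One possible way to organise this is to loop over a dictionary of estimators and rebuild the same pipeline around each one. The sketch below is only an illustration under assumptions: it uses a small synthetic data set from make_regression instead of California Housing so that it runs quickly, it covers only two of the five models, and n_estimators=20 is an arbitrary value chosen for speed.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.impute import SimpleImputer
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in data (assumption: NOT the California Housing set).
X, y = make_regression(n_samples=300, n_features=5, noise=10.0, random_state=43)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.1, shuffle=True, random_state=43)

models = {
    "decision_tree": DecisionTreeRegressor(random_state=43),
    "random_forest": RandomForestRegressor(n_estimators=20, random_state=43),
}

results = {}
for name, model in models.items():
    # Same imputer and scaler every time; only the final estimator changes.
    pipe = Pipeline([
        ("imputer", SimpleImputer(strategy="median")),
        ("scaler", StandardScaler()),
        (name, model),
    ])
    pipe.fit(X_train, y_train)

    results[name] = {}
    for split, X_s, y_s in [("train", X_train, y_train), ("test", X_test, y_test)]:
        pred = pipe.predict(X_s)
        results[name][split] = {
            "r2": r2_score(y_s, pred),
            "mse": mean_squared_error(y_s, pred),
            "mae": mean_absolute_error(y_s, pred),
        }
        print(name, split, results[name][split])
```

Comparing the train and test rows for each model is a quick way to spot overfitting: a fully grown decision tree usually scores almost perfectly on the train set and noticeably worse on the test set.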

Exercise 6: Grid Search

The goal of this exercise is to learn how to make an exhaustive search over specified parameter values for an estimator. This is very useful because the hyperparameters, which are the parameters of the model set before training, impact the performance of the model.
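"Exhaustive" simply means that every combination of the listed values is tried and scored. The sketch below shows the idea in plain Python with itertools.product. The function grid_search and the scoring function toy_score are hypothetical stand-ins: in a real grid search, scoring a combination means fitting the model with those hyperparameters and evaluating it on held-out data.

```python
from itertools import product


def grid_search(score_fn, param_grid):
    """Score every combination in param_grid; return (best_params, best_score)."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[n] for n in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical stand-in for "fit the model with these values and score it".
def toy_score(max_depth, min_samples_leaf):
    return -((max_depth - 5) ** 2) - (min_samples_leaf - 2) ** 2


param_grid = {"max_depth": [3, 5, 7], "min_samples_leaf": [1, 2, 4]}
best_params, best_score = grid_search(toy_score, param_grid)

print(best_params, best_score)  # {'max_depth': 5, 'min_samples_leaf': 2} 0
```

With 3 values for each of 2 hyperparameters, 9 combinations are evaluated; the cost grows multiplicatively with every hyperparameter added to the grid.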

The scikit-learn object that runs the Grid Search is called GridSearchCV. We will learn about cross-validation tomorrow. For now, let us set the parameter cv to [(np.arange(18576), np.arange(18576,20640))].

This means that GridSearchCV splits the data set into a single train set (the first 18576 rows) and a single test set (the remaining 2064 rows).
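The same pattern can be tried at a small scale. The sketch below is an illustration under assumptions: the data is synthetic (200 rows from make_regression, not California Housing), the 180/20 split and the grid values are arbitrary, and n_estimators=10 is chosen only to keep it fast.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in data (assumption: NOT the California Housing set).
X, y = make_regression(n_samples=200, n_features=4, noise=5.0, random_state=0)

# One (train_indices, test_indices) pair: first 90% to fit, last 10% to score.
n_train = 180
single_split = [(np.arange(n_train), np.arange(n_train, len(X)))]

gs = GridSearchCV(
    RandomForestRegressor(n_estimators=10, random_state=0),
    param_grid={"max_depth": [2, 4, None]},
    cv=single_split,
)
gs.fit(X, y)

print(gs.n_splits_)                     # number of splits actually used
print(gs.best_params_, gs.best_score_)  # best combination and its score
```

Because cv holds a single pair of index arrays, every combination is fitted once on the first block of rows and scored once on the second.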

Preliminary:

- Load the California Housing data set. As noted above, this time there's no need to split the data set into a train set and a test set since GridSearchCV does it.

You will have to run a Grid Search on the Random Forest on at least the hyperparameters mentioned below. It doesn't mean these are the only hyperparameters of the model. If possible, try at least 3 different values for each hyperparameter.

- Run a Grid Search with n_jobs set to -1 to parallelize the computations on all CPUs. The hyperparameters to change are: n_estimators, max_depth, min_samples_leaf. It may take some time to run.

Now, let us analyse the grid search's results in order to select the best model.

- Write a function that takes as input the Grid Search object and that returns the best fitted model, the best set of hyperparameters and the associated score:

def select_model_verbose(gs):

    return trained_model, best_params, best_score

- Use the trained model to predict on a new point:

new_point = np.array([[3.2031, 52., 5.47761194, 1.07960199, 910., 2.26368159, 37.85, -122.26]])

How do we know the best model returned by GridSearchCV is good enough and stable? That is what we will learn tomorrow!

WARNING: Some combinations of hyperparameters are not possible. For example, with the SVM, the linear kernel has no gamma parameter.
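One way to avoid such combinations is to describe the grid as a list of dictionaries, one per family of compatible values. The sketch below uses ParameterGrid from sklearn.model_selection only to enumerate the combinations; the values of C and gamma are arbitrary examples. As far as I know, GridSearchCV accepts the same list-of-dictionaries form for its param_grid argument.

```python
from sklearn.model_selection import ParameterGrid

# One dictionary per kernel, so gamma is only explored where it is used.
param_grid = [
    {"kernel": ["linear"], "C": [1, 10]},
    {"kernel": ["rbf"], "C": [1, 10], "gamma": [0.01, 0.1]},
]

combinations = list(ParameterGrid(param_grid))
for params in combinations:
    print(params)

print(len(combinations))  # 2 linear + 4 rbf = 6
```

A single flat dictionary with kernel, C and gamma would instead produce 8 combinations, half of them pairing the linear kernel with a gamma it does not use.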

Note:

- GridSearchCV can also take a Pipeline instead of a Machine Learning model. It is useful to combine some Imputers or Dimension reduction techniques with some Machine Learning models in the same Pipeline.

- It may be useful to check on Kaggle if some Kagglers share their Grid Searches.
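When the estimator is a Pipeline, each hyperparameter is addressed as step name, two underscores, then parameter name. The sketch below illustrates this under assumptions: synthetic data from make_regression, arbitrary step names ("imputer", "scaler", "model"), arbitrary grid values, and a single train/test split of 100 and 20 rows.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.impute import SimpleImputer
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in data (assumption: NOT the California Housing set).
X, y = make_regression(n_samples=120, n_features=4, noise=5.0, random_state=0)

pipe = Pipeline([
    ("imputer", SimpleImputer()),
    ("scaler", StandardScaler()),
    ("model", DecisionTreeRegressor(random_state=0)),
])

# "<step name>__<parameter name>" targets a parameter of one pipeline step.
param_grid = {
    "imputer__strategy": ["mean", "median"],
    "model__max_depth": [2, 4, 8],
}

# Single split: first 100 rows to fit, last 20 rows to score.
single_split = [(np.arange(100), np.arange(100, 120))]

gs = GridSearchCV(pipe, param_grid, cv=single_split)
gs.fit(X, y)

print(gs.best_params_)
```

Searching over the whole pipeline means preprocessing choices, such as the imputation strategy, are tuned together with the model instead of being fixed in advance.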

Resources:

- https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GridSearchCV.html

- https://stackoverflow.com/questions/38555650/try-multiple-estimator-in-one-grid-search

- https://medium.com/fintechexplained/what-is-grid-search-c01fe886ef0a

- https://scikit-learn.org/stable/auto_examples/model_selection/plot_grid_search_digits.html